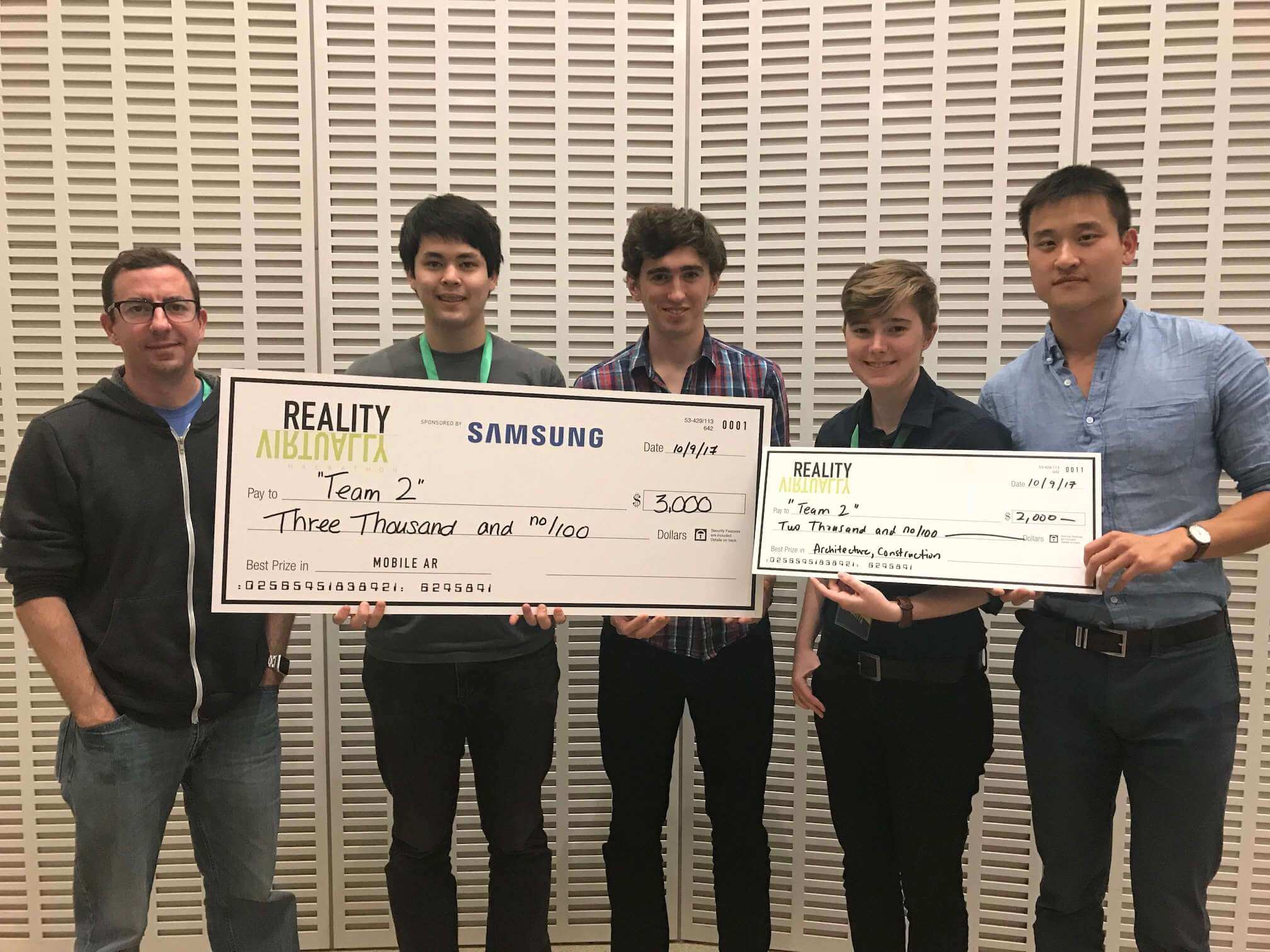

From left to right: Joe Crotchett, Avery Lamp, myself, Abbey Ayers, and Xiao Ling.

In the past decade, technology has enabled people to connect and collaborate with others who are geographically dispersed. People thousands of miles apart can now video chat through FaceTime, write together with Google Docs, or battle friends across the globe in Fortnite.

Strangely, technology has done very little to connect people who are physically in the same space. Commuters on the subway, staring at their phones, look much like the classic image of a train full of people reading the paper. On our screens today, everyone is in their own separate world.

Augmented Reality (AR) has the chance to change this. AR enables us to add digital content to the physical world. Unfortunately, current AR systems are limited to single-person experiences. Right now, AR does very little to enable sharing and collaboration in a single room. But that will soon change.

Physically together, digitally apart.

Rumor has it that Apple will introduce "multiplayer AR" tomorrow at WWDC, their yearly developer conference.

For bragging rights, I want to mention that Avery Lamp and I (along with some new friends) got multi-user AR working on iOS devices 8 months ago.

In October 2017, we competed in the Reality Virtually hackathon at MIT. We built Collaborative AR and won two separate prizes totaling more than $5,000.

The team included Abbey Ayers, Xiao Ling, Joe Crotchett, Avery Lamp, and me. Together, we were "Team 2". Not the second team; that was our chosen team name. Here's what we made:

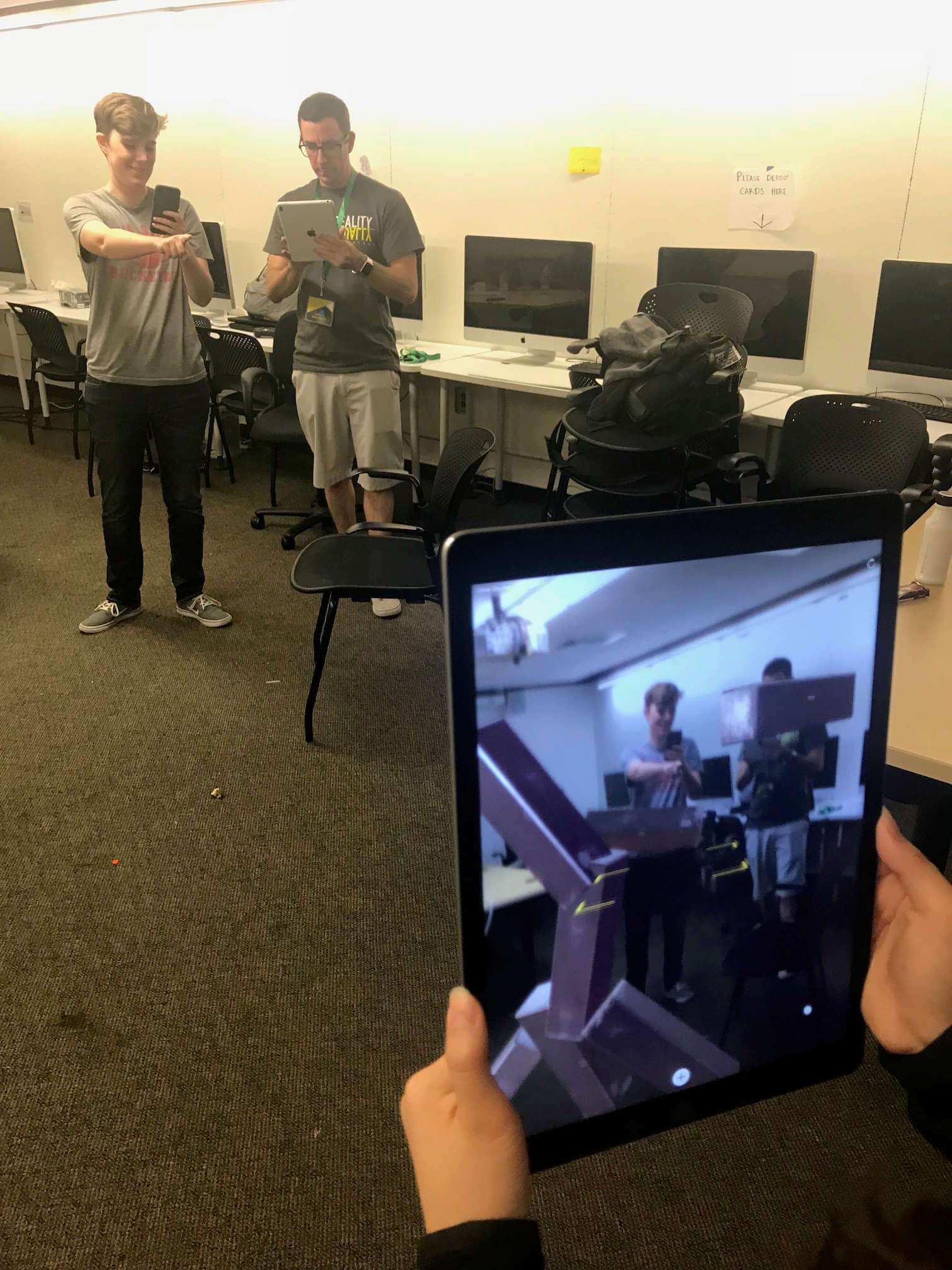

We built (what we believe to be) the first working version of multi-user AR using ARKit. Our app allows multiple people to build 3D creations in AR together. Every user is in the same physical space and uses their own iPhone or iPad to add objects to a shared project.

Our implementation allows any number of iOS devices to share the same space, and it requires no server whatsoever. All communication between devices happens peer-to-peer over WiFi or Bluetooth.
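One way to see how a shared project can work with no server is a minimal sketch like the following. It is not the team's code: `AddBlock`, `Peer`, and the JSON message format are hypothetical, the network is simulated with plain function calls, and the merge rule is a swapped-in technique (an add-only set keyed by unique IDs). On iOS, bytes like these could be handed to MultipeerConnectivity's `MCSession.send(_:toPeers:with:)`.

```python
import json
import uuid
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AddBlock:
    """Hypothetical 'add an object' message exchanged between peers."""
    block_id: str    # globally unique, so no server is needed to assign IDs
    kind: str        # e.g. "cube"
    position: tuple  # (x, y, z) in meters, in a frame all peers agree on

    def encode(self) -> bytes:
        # Serialize to bytes, the form a peer-to-peer transport would carry.
        payload = {"id": self.block_id, "kind": self.kind, "pos": list(self.position)}
        return json.dumps(payload).encode("utf-8")

    @staticmethod
    def decode(data: bytes) -> "AddBlock":
        obj = json.loads(data.decode("utf-8"))
        return AddBlock(obj["id"], obj["kind"], tuple(obj["pos"]))


@dataclass
class Peer:
    """One device holding its own replica of the shared project."""
    name: str
    blocks: dict = field(default_factory=dict)  # block_id -> AddBlock

    def place(self, kind: str, position: tuple) -> bytes:
        # Create a message with a fresh UUID, apply it locally, return bytes to broadcast.
        data = AddBlock(str(uuid.uuid4()), kind, tuple(position)).encode()
        self.receive(data)
        return data

    def receive(self, data: bytes) -> None:
        # Add-only, idempotent merge: duplicates and reordering do not change the result.
        msg = AddBlock.decode(data)
        self.blocks.setdefault(msg.block_id, msg)


# Simulated broadcast: every message reaches every peer, out of order and twice.
peers = [Peer("A"), Peer("B"), Peer("C")]
outbox = [p.place("cube", (0.1 * i, 0.0, -0.5)) for i, p in enumerate(peers)]
for p in peers:
    for data in list(reversed(outbox)) + outbox:
        p.receive(data)
assert all(p.blocks == peers[0].blocks for p in peers)
```

Because each placement is keyed by a UUID and the merge only ever adds, every peer ends up with the same set of objects regardless of the order in which messages arrive. Moving or deleting objects would need more machinery (for example, per-object version counters), which this sketch leaves out.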

Watching Abbey and Joe design a structure from a third device.

The entire application is written in Swift (making good use of MultipeerConnectivity and ARKit). However, our method could fairly easily be made cross-platform with Android. Tomorrow, Apple is expected to release a multi-user AR system that will likely be iOS-only. Google, meanwhile, just announced Cloud Anchors for ARCore, which will be cross-platform but requires a cloud backend. I think our design has advantages over both: it can be cross-platform without needing a server.

A key feature of the future of AR will be collaborating locally in a shared space, and we were one of the first teams to demo that possibility on modern mobile devices. One of the most exciting parts of getting this project to work was defying the documentation. Apple's ARKit documentation clearly states that point clouds will not be the same between devices, so multiple devices cannot operate in the same coordinate system. But we were able to hack around that limitation.
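The post doesn't describe how we got around the coordinate-system limitation, so what follows is not our method. It is a minimal sketch of one common approach, assuming ARKit's gravity-aligned world frames (each session's y-axis is parallel to gravity, so two devices' frames differ only by a translation and a rotation about y) and assuming both devices have recorded the pose of the same physical reference, for instance by placing an anchor on an agreed-upon spot while facing the same way. `GravityAlignedPose`, `rotate_y`, and `convert` are hypothetical names; a real app would work with 4x4 matrices such as `ARAnchor.transform` rather than a position plus a yaw angle.

```python
import math
from dataclasses import dataclass


def rotate_y(v, theta):
    """Rotate vector v about the +y (gravity) axis by theta radians (right-handed)."""
    c, s = math.cos(theta), math.sin(theta)
    x, y, z = v
    return (c * x + s * z, y, -s * x + c * z)


@dataclass(frozen=True)
class GravityAlignedPose:
    """Pose of the shared reference in one device's world frame: position plus heading about y."""
    position: tuple  # (x, y, z) in meters
    yaw: float       # radians about +y

    def to_local(self, p):
        # World -> reference-local: subtract the translation, then undo the yaw.
        d = tuple(a - b for a, b in zip(p, self.position))
        return rotate_y(d, -self.yaw)

    def to_world(self, q):
        # Reference-local -> world: apply the yaw, then add the translation.
        r = rotate_y(q, self.yaw)
        return tuple(a + b for a, b in zip(r, self.position))


def convert(p_in_a, ref_in_a, ref_in_b):
    """Re-express a point from device A's world frame in device B's world frame."""
    return ref_in_b.to_world(ref_in_a.to_local(p_in_a))


# Hypothetical poses of the same physical reference as seen by each device.
ref_in_a = GravityAlignedPose((1.0, 0.0, 0.0), 0.0)
ref_in_b = GravityAlignedPose((0.0, 0.0, 2.0), math.pi / 2)
p_in_b = convert((1.0, 0.0, -1.0), ref_in_a, ref_in_b)  # approximately (-1.0, 0.0, 2.0)
```

Here a point 1 m along the reference's -z axis on device A lands at the matching spot in device B's frame, and calling `convert` with the two poses swapped maps it back. Any error in how each device records the shared reference shows up directly as misalignment between the two views.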

Hopefully tomorrow Apple will introduce more than just multiplayer games in AR. Our project was called Collaborative AR because it was about creating something together, not just consuming media together. It's way bigger than just gaming together.

Sometime soon, we will all be able to create together in the same physical space.

PS: If you want to try our implementation yourself, our code is open source and available on GitHub.